0:00

The End of Traditional Coding: A Seismic Shift

You know, it's funny to think about how we used to build software.

Oh yeah.

0:05

Speaker 2

Completely different world.

0:06

Speaker 1

Right.

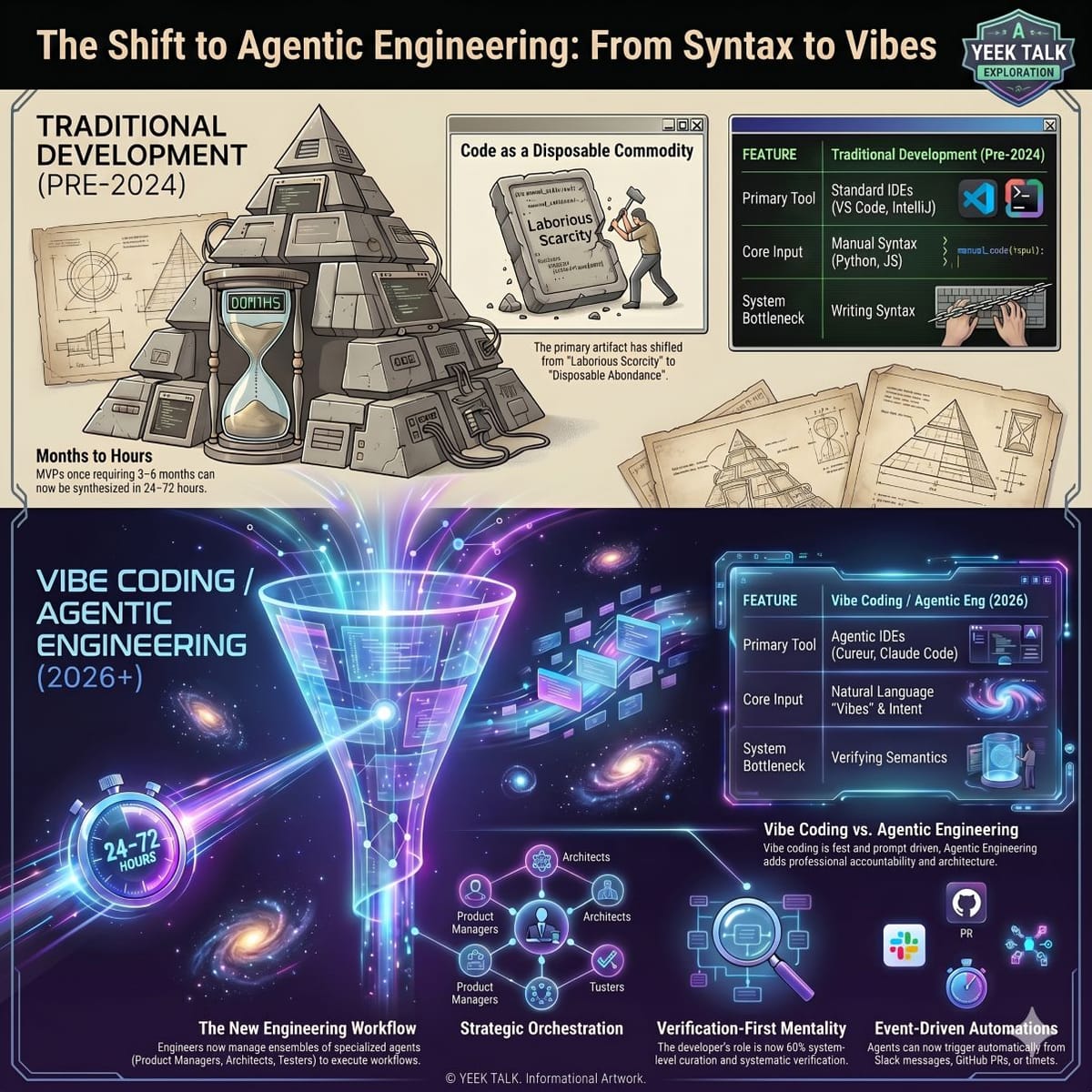

Like, if you were sitting in a product meeting back in, say, 2024, and you wanted to launch a completely new minimum viable product, that roadmap was practically carved in stone.

0:17

Speaker 2

Oh, absolutely.

You're talking, what, three to six months of development time, at least?

0:22

Speaker 1

And you're hiring a full-stack team, you're paying upwards of $150,000 just in developer salaries alone.

It was this incredibly heavy, expensive machine.

0:34

Speaker 2

Yeah, the overhead was massive.

0:35

Speaker 1

But here we are. It's 2026, and the reality of the software industry has just completely fractured.

Welcome to the deep dive everyone.

Today we are looking right at the SaaSpocalypse.

0:45

Speaker 2

It really is this apocalypse.

The market impact has been this massive tectonic shift because you know, we are now looking at that exact same MVP being built in 24 to 72 hours.

0:56

Speaker 1

Which is just wild to even say out loud.

0:58

Speaker 2

It is.

The financial barrier to entry has essentially collapsed.

It dropped from 150 grand down to like the price of a few API credits.

We're talking fractional pennies per computational request plus the salary of a single prompt architect.

1:15

Speaker 1

It is a staggering comparison, and it's exactly why we are doing this deep dive for you today.

We've got this massive stack of sources in front of us.

1:23

Speaker 2

Lots of really dense material to get through.

1:25

Speaker 1

Yeah, we're talking academic papers from the IEOM conference, cutting-edge research on automated code reviews, Replit documentation, and essays from industry veterans. And our mission today is to figure out exactly why AI agent software engineering has entirely replaced traditional software development for you and your teams.

1:43

Speaker 2

Right, because we really need to look past the surface-level hype of "AI runs code." We have to examine the mechanical shifts.

The focus has to be on how the actual architecture of development changed under the hood to allow this kind of speed.

1:54

From Monolithic Models to Collaborative AI Teams

Okay, let's unpack this.

We are going to take you on a journey through this paradigm shift.

We'll explore how we moved away from simple AI autocomplete, how our development environments completely morphed to support autonomous AI teams, and most importantly, what this all means for your role as a human in this new landscape.

2:15

Speaker 2

The best place to start is with the architecture itself.

I mean, the reason traditional development is dying isn't just because the underlying AI models process tokens faster or memorize more documentation.

2:26

Speaker 1

Right, it's not just a bigger brain.

2:28

Speaker 2

Exactly.

The fundamental shift is that the AI got collaborative.

We moved from single-model applications to multi-agent systems, or MAS.

2:37

Speaker 1

I like to think of the early days of AI coding, when you'd just ask a single massive language model to write a complex script for you, as like watching a one-man band trying to play a Beethoven symphony.

2:48

Speaker 2

Oh, that's a great analogy.

2:49

Speaker 1

Right.

It's impressive.

For about a minute the guy is kicking the drum, blowing the harmonica, strumming the guitar, but eventually the complexity is just too much and he drops the cymbals.

2:57

Speaker 2

He absolutely drops the cymbals.

2:59

Speaker 1

And in the AI world, dropping the cymbals meant maxing out your context limits, dropping critical logic threads, and just flat out hallucinating variables that didn't even exist.

3:08

Speaker 2

What's fascinating here is that the industry realized throwing more compute at a monolithic model was a dead end for complex workflows.

A single model cannot hold the context of an entire enterprise codebase, write the new feature, build the test suite, and review its own logic all at once.

3:27

Speaker 1

The processing window just gets totally saturated.

3:30

Speaker 2

Right, so the solution was to mirror human organizational structures.

Frameworks like CrewAI and LangGraph were built specifically to orchestrate teams of specialized AI personas.

3:41

Speaker 1

So instead of 1 AI trying to be the full stack developer, the QA tester, and the project manager all at once, you break those roles up.

3:48

Speaker 2

You compartmentalize.

3:49

Speaker 1

Exactly.

The sources give this really compelling example from a LangGraph tutorial about building a research assistant.

They didn't just build 1 massive bot, they built 4 distinct agents.

3:59

Speaker 2

A writer, a researcher, a critic, and a coordinator.

Yeah.

4:02

Speaker 1

And the real magic happens when they add friction into that workflow, right?

4:06

Speaker 2

Yes, the friction is key.

The critic agent is designed to actively look for flaws. You have an agent solely dedicated to providing structured feedback, and they specifically use JSON for this.

It's a lightweight data format that keeps the feedback in a predictable, machine-parseable structure.

4:22

You introduce a mechanical check and balance.

4:24

Speaker 1

So it's not just politely suggesting edits.

4:27

Speaker 2

No.

The critic evaluates the researcher's findings, parses the JSON to verify every single citation is valid, and forces a rewrite before the writer even touches the data.

It mechanically enforces peer review.
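As a rough illustration of that kind of mechanical gate, here is a minimal Python sketch. It is not the tutorial's code: the field names `approved` and `issues`, and the function names, are assumptions made up for this example. The idea is only that the critic's reply must parse as JSON with the expected shape before the workflow decides whether the writer or the researcher goes next.

```python
import json

# Hypothetical schema for the critic's reply; the real tutorial may differ.
REQUIRED_KEYS = {"approved", "issues"}


def parse_critic_feedback(raw: str) -> dict:
    """Parse the critic's JSON reply and check that it has the expected shape."""
    feedback = json.loads(raw)  # raises json.JSONDecodeError on malformed output
    if not isinstance(feedback, dict):
        raise ValueError("critic feedback must be a JSON object")
    missing = REQUIRED_KEYS - feedback.keys()
    if missing:
        raise ValueError(f"critic feedback is missing keys: {sorted(missing)}")
    if not isinstance(feedback["approved"], bool):
        raise ValueError("'approved' must be true or false")
    if not isinstance(feedback["issues"], list):
        raise ValueError("'issues' must be a list")
    return feedback


def next_step(raw: str) -> str:
    """Send work to the writer only when the critic approves with no open issues."""
    feedback = parse_critic_feedback(raw)
    if feedback["approved"] and not feedback["issues"]:
        return "writer"
    return "researcher"  # force a rewrite before the writer sees the data
```

Because the reply is structured, a rejected draft loops back automatically instead of relying on someone reading free-form comments.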

4:38

Speaker 1

Wow, and CrewAI takes this compartmentalization even further.

There's an example in the sources of building a ChatGPT clone entirely from scratch.

They don't just assign vague roles.

4:49

Speaker 2

They pair highly specific agents with highly specific sandbox tools.

4:53

Speaker 1

Right, so you have a search agent that is exclusively handed the web searching tool, and you have a scraper agent that only knows how to extract text from a URL.

They literally cannot do each other's jobs.

5:02

Speaker 2

That strict boundary is the secret to their reliability.

When you restrict an agent's domain and remove unnecessary tools from its environment, you drastically reduce the mathematical probability of it hallucinating or going off script.
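A small Python sketch of that boundary, assuming a hypothetical `Agent` class with a per-agent tool allowlist. This is plain Python for illustration, not CrewAI's API, and the `fake_search` and `fake_scrape` functions are stand-ins for real tools.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict


@dataclass
class Agent:
    """Hypothetical agent that can only call tools it was explicitly handed."""
    name: str
    tools: Dict[str, Callable[[str], str]] = field(default_factory=dict)

    def use(self, tool_name: str, argument: str) -> str:
        # Any tool outside the allowlist is rejected instead of improvised.
        if tool_name not in self.tools:
            raise PermissionError(f"{self.name} has no tool named {tool_name!r}")
        return self.tools[tool_name](argument)


def fake_search(query: str) -> str:
    return f"results for {query}"  # stand-in for a real web search tool


def fake_scrape(url: str) -> str:
    return f"text of {url}"  # stand-in for a real page scraper


search_agent = Agent("search_agent", {"web_search": fake_search})
scraper_agent = Agent("scraper_agent", {"scrape_url": fake_scrape})
```

Here `scraper_agent.use("web_search", "agent frameworks")` raises `PermissionError`, which is the "they literally cannot do each other's jobs" property expressed in code.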

5:16

Speaker 1

That makes total sense.

5:17

Speaker 2

Furthermore, CrewAI utilizes YAML configuration files for this setup.

YAML is essentially just a human-readable text format.

5:26

Speaker 1

Which is a massive shift, right?

5:27

Speaker 2

Huge.

It means a product manager can open a basic text file, adjust an agent's goals, tweak its back story, or restrict its tool set without ever touching the core Python logic running the system.

5:38

Speaker 1

But OK, if we have 4 or 10 or 50 of these smart AI personas collaborating, putting them in a standard VS Code window is like trying to fit a corporate boardroom into a phone booth.

5:50

How IDEs Became Self-Healing Automated Workspaces

It really is.

5:51

Speaker 1

They need their own space to talk and execute commands.

Is that why tools like Cursor 3 and agentic IDEs completely took over the market?

5:59

Speaker 2

That spatial limitation was the exact catalyst.

Traditional integrated development environments, you know, your standard code editors, were built for human fingers typing sequentially on a keyboard.

6:08

Speaker 1

Line by line.

6:09

Speaker 2

Exactly.

They simply were not engineered for multiple AI agents simultaneously scaffolding databases in the background, writing back end logic, and spinning up isolated test suites all at the same time.

6:22

Speaker 1

I have to push back a little here though.

OK go for it.

I'm looking at my editor right now and when I hit tab it completes the line for me.

I've used GitHub Copilot.

Isn't this new wave of agentic IDEs just advanced autocomplete?

6:37

Like it's still fundamentally waiting for me to provide the initial keystrokes, right?

6:41

Speaker 2

That is the most common misconception, but it severely underestimates the third era of software development.

We have completely moved past active line-by-line assistance.

6:49

Speaker 1

Wait, really?

6:50

Speaker 2

Oh absolutely.

The IDE has morphed into a background automated workspace.

The agents live and operate inside the terminal and the file system, often without your immediate input.

You aren't typing anymore, you are supervising.

Huh.

7:03

Speaker 1

The features in Cursor 3 really highlight that supervisory role, I guess.

They introduced something called agent tabs.

7:09

Speaker 2

Yes, agent tabs are brilliant.

7:10

Speaker 1

Just like you have multiple browser tabs open for different websites, you now have tabs for different agents working on entirely different tasks side by side.

You might have one tab where an agent is migrating a database and another where a separate agent is updating CSS files.

7:29

Speaker 2

And you can take that a step further with their environment handoff feature.

This fundamentally rewired the daily routine of an engineer.

7:37

Speaker 1

How so?

7:38

Speaker 2

Well, you can assign a deeply complex multi file refactoring task to an agent on your local machine.

Because that process might take hours of compute time, you can push that running agent state to the cloud, shut your laptop and go offline.

7:51

Speaker 1

Just completely walk away.

7:52

Speaker 2

Exactly.

The agent continues executing terminal commands, running tests, and pushing commits in a virtual container.

When you reconnect the next day, the entire branch is finished.

8:02

Speaker 1

That completely blows my mind.

And it's not even just tasks you manually assign before you log off.

The sources detail these event-driven architectures where you aren't involved at all.

8:11

Speaker 2

Right, the automations are incredible.

8:12

Speaker 1

Like, an agent can be triggered purely by a webhook.

A customer submits a PagerDuty alert at 3:00 AM.

An agent automatically wakes up, reads the stack trace from the server logs, replicates the environment, and initiates a bug fix while you were sleeping.

Yep.

8:27

Speaker 2

Or a Slack message from marketing can trigger an agent to update the public documentation.

8:33

Speaker 1

Replit AI operates on a similar autonomous level with what they call the agent loop and self healing capabilities.

Let's say an agent writes a piece of code and tries to deploy it, but the build fails.

8:43

Speaker 2

Historically, a human would have to read the red error text, search stack overflow, and just sort of guess the fix.

8:49

Speaker 1

But not anymore.

The self healing agent intercepts that standard error output.

It mathematically maps the error trace back to the exact line in the abstract syntax tree of the code, writes a targeted patch, and reruns the deployment command.

9:03

Speaker 2

It iterates in a closed loop until the green light flashes.

9:06

Speaker 1

But wait, I hear you saying the AI patches its own bugs, but how does it not just guess infinitely and bankrupt my entire API budget?

If it keeps trying and failing?

Couldn't I wake up to thousands of dollars in server costs?

9:19

Speaker 2

That is a very real fear, and it raises an important question about resource management in this new paradigm.

Platforms like Replit operate on what the sources call a credit economy.

Every single API call the agent makes, every time it asks the model to read a file or write a patch, it costs a fraction of a cent.

9:37

Speaker 1

Usually that's incredibly cheap compared to a human developer's hourly rate, right?

9:41

Speaker 2

It is, but the risk of an overconfident AI getting stuck in an infinite debugging loop is very real.

It tries a fix, breaks a dependency, tries to fix the dependency, breaks the original file, and repeats.

9:54

Speaker 1

Oh man, it's just spinning its wheels.

9:56

Speaker 2

Right, and it will burn through your API credits with terrifying speed.

The autonomy is incredibly powerful, but it absolutely requires hard coded guardrails and budget caps.
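One way to picture those guardrails is a bounded retry loop. The sketch below is an assumption-laden illustration in Python, not Replit's implementation: `run_build`, `apply_patch`, `max_patches`, and `budget_usd` are hypothetical names, and the per-patch cost is whatever the caller reports.

```python
from typing import Callable, Optional


def self_heal(
    run_build: Callable[[], Optional[str]],
    apply_patch: Callable[[str], float],
    max_patches: int = 5,
    budget_usd: float = 1.00,
) -> dict:
    """Build, patch, and rebuild until the build passes or a guardrail trips.

    run_build returns None on success, or the error text on failure.
    apply_patch takes the error text, attempts a fix, and returns its cost in USD.
    """
    spent = 0.0
    patches = 0
    while True:
        error = run_build()
        if error is None:
            return {"status": "passed", "patches": patches, "spent_usd": spent}
        if patches >= max_patches:
            # Hard cap on attempts: stop instead of looping forever.
            return {"status": "gave_up", "patches": patches, "spent_usd": spent}
        if spent >= budget_usd:
            # Stop once spending reaches the cap (one patch can still overshoot it).
            return {"status": "budget_exhausted", "patches": patches, "spent_usd": spent}
        spent += apply_patch(error)
        patches += 1
```

Both limits are checked before every new patch, so a fix that keeps breaking something else ends in a "gave_up" or "budget_exhausted" result for a human to review rather than an open-ended bill.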

10:06

Speaker 1

Which brings us to the most critical transition here.

10:08

Enforcing Rigor with AI Supreme Courts and LangGraph

If you have these powerful IDEs running autonomous teams of agents that can write, test, and deploy code at lightning speed, how do we stop them from breaking production?

10:18

Speaker 2

That is the $1,000,000 question.

10:20

Speaker 1

How do you prevent total unmitigated chaos when machines are iterating faster than you can even read?

10:25

Speaker 2

You have to force strict mathematical boundaries onto these AI workflows.

We are taking the structured software development life cycle, the SDLC phases humans have used for decades, like requirements, design, implementation, testing, and deployment, and mapping them onto agent behaviors.

10:44

Speaker 1

Here's where it gets really interesting.

When you apply this rigid structure specifically to code reviews, you essentially end up creating an AI Supreme Court.

10:52

Speaker 2

That is exactly what it is.

The research paper from Trip O outlines a multi agent system exactly like this.

They recognize that relying on a single AI to review thousands of lines of code is inconsistent.

It misses context.

11:05

Speaker 1

Because it's doing too much.

11:06

Speaker 2

Right, so they built a system with four highly constrained domain specific justices.

11:10

Speaker 1

You have the readability agent, the refactoring agent, the performance agent, and the security agent.

They all independently comb through the same piece of new code without talking to each other.

11:19

Speaker 2

And their prompts are hyper focused.

The performance agent physically cannot care if a variable is named poorly.

Its only directive is to hunt for inefficiencies, like nested loops or data structures that will spike memory usage.

11:31

Speaker 1

And the security agent is only looking for exposed credentials or injection flaws.

But the obvious problem is what happens when these agents flag overlapping issues.

11:41

Speaker 2

Yeah, that's where it gets tricky.

11:42

Speaker 1

Right.

If the security agent flags a memory leak, and the performance agent flags an infinite loop that causes a memory leak, you don't want two separate bug reports for the exact same problem.

11:53

Speaker 2

Enter the consensus agent, the Chief Justice.

11:55

Speaker 1

Yes, the Chief Justice. The consensus agent utilizes a framework called EMCS, Evaluation Matrix Consensus Scoring.

It doesn't just read the text of the bug reports.

What does it do?

It strips away the variable names and analyzes the underlying logic trees.

It merges semantically similar issues so the human isn't bombarded with duplicates.

12:15

Then it calculates a final score based on a matrix of confidence, inter-agent agreement, and severity.
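To make the merge-then-score idea concrete, here is a toy Python sketch. The paper's actual EMCS formula is not given in this discussion, so everything below is an assumption: the severity weights, the `severity * confidence * (1 + agreement)` score, and the crude merge rule of grouping findings by code location instead of real semantic similarity.

```python
from collections import defaultdict
from dataclasses import dataclass

# Assumed severity scale and reviewer count; not taken from the paper.
SEVERITY_WEIGHT = {"low": 1, "medium": 2, "high": 3, "critical": 4}
NUM_REVIEWERS = 4  # readability, refactoring, performance, security


@dataclass(frozen=True)
class Finding:
    agent: str
    location: str      # e.g. "loop.py:7"
    severity: str      # a key of SEVERITY_WEIGHT
    confidence: float  # 0.0 to 1.0, self-reported by the agent
    message: str


def consensus(findings):
    """Merge findings that share a location, then rank the merged issues."""
    groups = defaultdict(list)
    for f in findings:
        groups[f.location].append(f)  # stand-in for semantic deduplication

    report = []
    for location, group in groups.items():
        agents = {f.agent for f in group}
        agreement = len(agents) / NUM_REVIEWERS
        confidence = sum(f.confidence for f in group) / len(group)
        severity = max(SEVERITY_WEIGHT[f.severity] for f in group)
        report.append({
            "location": location,
            "agents": sorted(agents),
            "score": severity * confidence * (1 + agreement),
            "messages": [f.message for f in group],
        })
    # Highest score first, so the most critical issue leads the report.
    return sorted(report, key=lambda r: r["score"], reverse=True)
```

With this scoring, a critical finding from one agent still outranks a low-severity one, and two agents flagging the same location produce a single merged entry with a higher agreement term.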

12:20

Speaker 2

So the system mathematically understands that a SQL injection vulnerability flagged by the security agent is vastly more critical than, like, a minor indentation warning from the readability agent.

12:31

Speaker 1

Exactly, and it prioritizes the final output report for you based on that.

That context awareness is a massive leap over traditional static analysis tools.

Older static tools are so rigid. They look for exact, dictionary-style pattern matches.

12:45

Speaker 2

And they just create constant false alarms.

Yeah, if you use a variable name that looks slightly unsafe, they flag it.

12:51

Speaker 1

Right.

But this AI Supreme Court actually understands the intent of the code.

It filters out the noise and provides synthesized actionable verdicts.

13:00

Speaker 2

And we can orchestrate this entire SDLC process using graph frameworks like LangGraph.

The IEOM 2025 paper details exactly how to map an entire development cycle into a mathematical graph.

13:12

Speaker 1

Break that down for us.

13:13

Speaker 2

Sure.

In a framework like LangGraph, each phase of the SDLC becomes a node.

Think of a node as a computational function where an agent or a team of agents lives.

The transitions between these phases become edges, the pathways that pass data from one node to the next.

13:27

Speaker 1

So if we are using an incremental or spiral development model, we don't just go in a straight line from requirements to deployment.

The graph allows the workflow to loop back on itself safely.

13:37

Speaker 2

The power of the graph lies in conditional edges.

Let's say the implementation node writes the code and sends the data payload over an edge to the testing node.

If the testing node detects a failure, a conditional edge automatically reroutes the entire workflow back to the design or implementation node.

13:56

It acts as a physical barrier.

13:58

Speaker 1

It ensures that, mathematically, no code payload is allowed to reach the deployment node without successfully passing every single validation check in the graph.

14:08

Speaker 2

Precisely.

14:08

Speaker 1

OK, so if we take a step back and look at this machine we've built, we have agents planning the architecture, agents writing the syntax, agents testing the payloads, and a Supreme Court of agents reviewing it by consensus.

14:19

Speaker 2

It's an incredible system.

14:21

The Human Role: Orchestration, Verification, and Judgment

It is, but it leads to the obvious elephant in the room question for you, the listener.

Are you engineering yourself out of a job?

If I'm not writing the loop, what am I actually doing at my desk at 9:00 AM?

14:32

Speaker 2

If we connect this to the bigger picture, there's a fascinating paper titled Rethinking Software Engineering for Agentic AI Systems that addresses this existential dread directly.

14:42

Speaker 1

What's their take?

14:43

Speaker 2

The core thesis is that the human bottleneck in software development hasn't disappeared. It has fundamentally shifted up the abstraction ladder.

14:51

Speaker 1

Because the bottleneck used to be writing the syntax, just sitting there trying to remember exactly how to type out a generic Python function or configure a C++ pointer perfectly.

15:02

Speaker 2

Exactly.

And syntax mastery is officially an abundant commodity.

The machine can type faster and more accurately than any human.

The new bottleneck is verifying semantics.

15:13

Speaker 1

Does the code actually solve the deeply specific business problem?

Does it integrate cleanly with the legacy systems?

15:21

Speaker 2

Right, the engineer's role has elevated from manual authorship to strategic orchestration.

15:26

Speaker 1

Simon Willison, a really prominent developer in this space, wrote a great piece where he coined the term Vibe Engineering.

15:32

Speaker 2

I love that term.

15:33

Speaker 1

It's so good. He says working with these highly autonomous coding agents is less like traditional programming and more like a weird form of management.

15:41

Speaker 2

It's an incredibly accurate description of the new workflow.

You are managing an AI team exactly how a director manages human teams.

The sources outline a completely new set of core competencies for engineers.

The first step of your morning isn't typing, it's intent articulation.

15:59

Speaker 1

So you have to translate what the stakeholders want into machine actionable directives.

If you give an agent a vague prompt like "build a secure back end," the AI will build something, but it's going to hallucinate the details.

16:12

Speaker 2

Without a doubt, you have to specify the exact encryption protocols, the database schema logic and the precise API endpoints.

16:20

Speaker 1

And because the AI will inevitably misinterpret some of those directives, the second competency naturally becomes systematic verification.

16:27

Speaker 2

AI generated code is dangerously deceptive because it is almost always syntactically correct, but it might be subtly logically flawed.

16:34

Speaker 1

So it looks right, but it behaves wrong.

16:36

Speaker 2

Exactly.

You have to be an expert in designing automated testing pipelines and runtime monitors to catch failure modes specific to generative outputs.

16:44

Speaker 1

So you're articulating intent, verifying the output, and meanwhile you have dozens of agents bumping into each other trying to execute these tasks, which means you also have to manage the traffic.

16:56

Speaker 2

That is the multi agent orchestration layer.

You are the conductor waving a baton at an orchestra.

You're an air traffic controller.

17:03

Speaker 1

That sounds stressful.

17:05

Speaker 2

It can be. You have multiple autonomous vehicles moving at extremely high speeds, executing database writes and pushing commits.

Your job is to define the LangGraph edges and monitor the API budgets to ensure they don't crash into each other in the deployment pipeline.

17:20

Speaker 1

And finally, there is human judgement.

The AI doesn't understand business risk.

It doesn't understand regulatory compliance or brand reputation.

You are the final sign off.

You own the residual risk.

17:31

Speaker 2

Which brings up the irony of this entire paradigm shift.

To be an effective vibe engineer, traditional rigorous software practices are significantly more important now, not less.

The empirical evidence is crystal clear.

LLMs actively reward disciplined engineering.

17:45

Speaker 1

Let's talk about the mechanics of why that is.

Take test-first development, where you write a rigorous test suite before you even write the feature code.

17:52

Speaker 2

Oh, this is crucial.

17:54

Speaker 1

Right.

If you hand that test suite to an agentic system, it will absolutely fly.

It has a mathematical target to hit and it will self heal until it passes.

But if you skip the tests, the agent might hallucinate a patch for a bug and in the process silently break a completely unrelated feature.

18:12

Speaker 2

And without tests, you won't know until the client calls you screaming.

18:16

Speaker 1

Yeah, nobody wants that.

18:17

Speaker 2

The exact same logic applies to version control.

Agents are incredibly proficient at navigating git history.

If your commit messages are clean and granular, an agent can use a command like git bisect, which is essentially a binary search through your project's history, to track down the exact origin of a bug across 10,000 commits in seconds.
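The binary search behind that claim is short enough to sketch in Python. This is an illustration of the search idea only, not git's implementation: `first_bad_commit` and `is_bad` are hypothetical names, and the sketch assumes the oldest commit is good, the newest is bad, and every commit after the first bad one is also bad.

```python
from typing import Callable, Sequence


def first_bad_commit(commits: Sequence[str], is_bad: Callable[[str], bool]) -> str:
    """Return the earliest bad commit in an oldest-to-newest list.

    Assumes commits[0] is good, commits[-1] is bad, and that once a commit
    is bad every later commit is bad too.
    """
    good, bad = 0, len(commits) - 1
    while bad - good > 1:
        mid = (good + bad) // 2
        if is_bad(commits[mid]):
            bad = mid   # the bug was introduced at mid or earlier
        else:
            good = mid  # the bug was introduced after mid
    return commits[bad]
```

Each step halves the remaining range, so 10,000 commits need at most 14 checks. How long that takes in practice depends on how long each check (usually a test run) takes.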

18:38

Speaker 1

But if your foundation is messy, if you just push massive undocumented changes all at once, the AI can't parse the history.

It will just rapidly multiply that mess at machine speed.

18:47

Speaker 2

Exactly.

The long term maintainability of your software depends entirely on your process infrastructure, your tests and your architecture.

It does not depend on the generative models raw coding capability.

18:59

When AI Optimizes Its Own Workflow Graphs

So what does this all mean?

We started by looking at a world where building software took half a year, required a massive budget, and forced developers to manually type every single semicolon.

19:11

Speaker 2

And we unpacked how the industry tore down those monolithic hallucination prone AI models to build specialized multi agent teams that argue, collaborate and check each other's work.

19:21

Speaker 1

We explored how our IDEs evolved from simple text editors into automated background workspaces that heal their own bugs and trigger off server alerts.

And we mapped how rigorous SDLC workflows act as the crucial guardrails to keep that hyperfast machine from crashing into production.

19:37

Speaker 2

Ultimately, the discipline of software engineering is maturing.

We've solved the problem of generating text. Code itself is no longer scarce.

It is an abundant, almost disposable resource.

But building reliable, scalable and complex systems is harder than ever.

19:51

Speaker 1

So it's not the end of the engineer.

19:53

Speaker 2

Not at all.

This transition to agentic engineering doesn't replace you, it amplifies your existing expertise.

It strips away the mechanical typing and forces you to focus entirely on system level architecture, semantic verification and accountable oversight.

20:08

Speaker 1

It makes you the true orchestrator.

But as we wrap up today's deep dive, I want to leave you, the listener, with a final, slightly provocative thought to mull over.

20:18

Speaker 2

Well, this is a good one.

20:19

Speaker 1

We spent this entire time talking about how we, the humans, are designing these graphs, defining the boundaries for these agents, and orchestrating these automated workflows.

We are delegating the architecture, the coding and the code reviewing to the machine.

20:34

Speaker 2

Right.

And the capabilities of these multi agent systems are compounding daily.

20:38

Speaker 1

Exactly.

So if we are handing over all the mechanical execution to these highly specialized AI teams, how long until these agentic systems analyze their own bottlenecks and realize they can dynamically spawn their own new hyper specialized sub agents to solve problems we didn't even anticipate?

20:56

Speaker 2

How long until they start optimizing and rewriting their own workflow graphs without asking for our permission?

21:01

Speaker 1

Effectively evolving the fundamental laws of software engineering at a speed we can't even comprehend.

21:06

Speaker 2

The machine learning how to build a better machine.

21:09

Speaker 1

Definitely something to think about the next time you open a blank agentic tab and ask your AI to build an app.

Vibe Engineering: The End of Traditional Coding